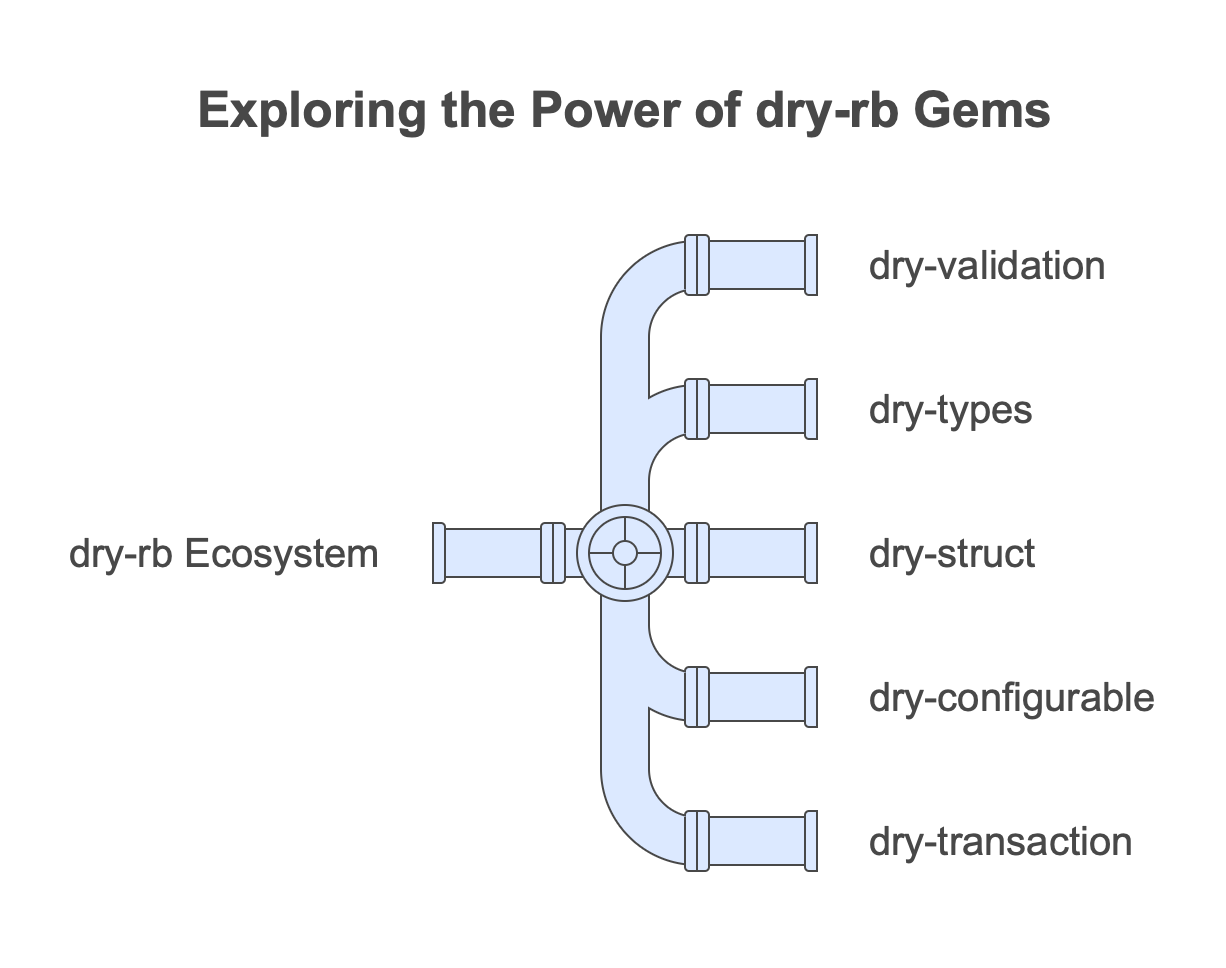

In this post, we’ll dive into five of my favorite gems from the dry-rb family:

- dry-validation: For handling strong parameters, form validation, and more.

- dry-types: A toolkit for defining and enforcing data types.

- dry-struct: For creating immutable objects with strict typing.

- dry-configurable: Simplifies application and library configuration.

- dry-transaction: A framework for building business logic with clear, composable steps.

Let’s break down each gem and see how they can elevate your Ruby projects.

1. Dry Validation: Robust Input Handling and Validation

When building apps, validating inputs can quickly become messy. Enter dry-validation. It provides a powerful, declarative way to validate:

- Strong parameters in controllers

- Form inputs for user-facing applications

- GET params, JSON documents, or ENV configuration

Example: Form Validation

    require "dry-validation" # also loads dry-schema, which provides Dry::Schema

    UserSchema = Dry::Schema.Params do
      required(:name).filled(:string)
      required(:email).filled(:string, format?: /\A.+@.+\..+\z/)
      optional(:age).maybe(:integer, gt?: 18)
    end

    input = { name: "Nico", email: "nico@example.com", age: 20 }
    result = UserSchema.call(input)

    if result.success?
      puts "Valid input: #{result.to_h}"
    else
      puts "Errors: #{result.errors.to_h}"
    end

This level of clarity and flexibility beats hand-rolled validation logic any day.
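To see what the schema replaces, here is a rough hand-rolled version of the same three rules in plain Ruby. `validate_user` is a hypothetical helper written for this comparison, not part of dry-validation, and unlike `Dry::Schema.Params` it does no coercion of string input.

```ruby
# Hand-rolled comparison only; validate_user is a hypothetical helper.
def validate_user(input)
  errors = {}

  # name: required, must be a non-empty string
  name = input[:name]
  unless name.is_a?(String) && !name.empty?
    errors[:name] = ["must be a non-empty string"]
  end

  # email: required, must be a string matching the same pattern as above
  email = input[:email]
  unless email.is_a?(String) && email.match?(/\A.+@.+\..+\z/)
    errors[:email] = ["is missing or has an invalid format"]
  end

  # age: optional and may be nil; when present it must be an integer above 18
  age = input[:age]
  unless age.nil? || (age.is_a?(Integer) && age > 18)
    errors[:age] = ["must be an integer greater than 18"]
  end

  errors
end

valid_errors = validate_user(name: "Nico", email: "nico@example.com", age: 20)
invalid_errors = validate_user(name: "", email: "nope", age: 15)

puts valid_errors.empty?   # prints true
puts invalid_errors.keys.inspect
```

Every new rule adds another branch here, and the error format is whatever each caller happens to invent. The declarative schema keeps the rules in one place and returns errors in a consistent shape.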

2. Dry Types: Enforce Data Integrity Everywhere

With dry-types, you can define custom data types to ensure that your program handles data consistently. This becomes invaluable for type safety in a dynamically-typed language like Ruby.

Example: Custom Types

    require "dry-types"

    module Types
      include Dry.Types()

      Email = Types::String.constrained(format: /\A.+@.+\..+\z/)
      Age = Types::Integer.constrained(gt: 18)
    end

    puts Types::Email["nico@example.com"] # => "nico@example.com"
    puts Types::Age[20] # => 20
    # Types::Age[15] # => Raises Dry::Types::ConstraintError

Using these custom types ensures your objects always contain valid data.

3. Dry Struct: Immutable, Typed Objects

Need to build domain models or value objects with strict typing and immutability? dry-struct is your tool of choice. It combines the power of dry-types with a structure that enforces clean, predictable data modeling.

Example: User Struct

    require "dry-struct"

    module Types
      include Dry.Types()
    end

    class User < Dry::Struct
      attribute :name, Types::String
      attribute :email, Types::String
      attribute :age, Types::Integer.optional
    end

    user = User.new(name: "Nico", email: "nico@example.com", age: 30)
    puts user.name # => "Nico"
    # user.name = "Bob" # => Raises NoMethodError (structs define no attribute writers)

By enforcing immutability, dry-struct helps you avoid hard-to-debug state mutations.
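The idea behind writer-less value objects can be sketched in plain Ruby. This is only an illustration of the pattern, not how dry-struct is implemented; `PlainUser` and its `with` method are hypothetical names.

```ruby
# Illustration of the pattern only; PlainUser is a hypothetical class.
class PlainUser
  attr_reader :name, :email # readers only, no writers

  def initialize(name:, email:)
    @name = name.dup.freeze
    @email = email.dup.freeze
    freeze # no instance variable can be reassigned after construction
  end

  # Returns a new object with the given attributes replaced,
  # leaving the original untouched.
  def with(**changes)
    attrs = { name: name, email: email }.merge(changes)
    self.class.new(**attrs)
  end
end

user = PlainUser.new(name: "Nico", email: "nico@example.com")
renamed = user.with(name: "Bob")

puts user.name                  # prints Nico
puts renamed.name               # prints Bob
puts user.respond_to?(:name=)   # prints false
```

Because a change always produces a new object, any code still holding a reference to `user` keeps seeing the values it started with.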

4. Dry Configurable: Simplify Configuration Management

Managing configuration is a common pain point in apps. With dry-configurable, you can define clear, flexible settings for your application or library.

Example: Application Configuration

    require "dry-configurable"

    class AppConfig
      extend Dry::Configurable

      # Defaults are passed with the default: keyword (dry-configurable 0.13 and later)
      setting :database_url, default: "postgres://localhost/mydb"
      setting :log_level, default: :info
    end

    AppConfig.configure do |config|
      config.database_url = "postgres://localhost/production"
      config.log_level = :debug
    end

    puts AppConfig.config.database_url # => "postgres://localhost/production"
    puts AppConfig.config.log_level # => :debug

It’s intuitive, thread-safe, and integrates seamlessly with other gems.

5. Dry Transaction: Composable Business Logic

dry-transaction is a clean, composable way to build workflows or business logic pipelines. It helps you structure code into discrete, reusable steps with automatic error handling.

Example: User Signup Pipeline

    require "dry-transaction"

    class SignupTransaction
      include Dry::Transaction

      step :validate
      step :persist
      step :send_welcome_email

      def validate(input)
        if input[:email] =~ /\A.+@.+\..+\z/
          Success(input)
        else
          Failure("Invalid email")
        end
      end

      def persist(input)
        # Simulate saving to DB
        input[:id] = 1
        Success(input)
      rescue
        Failure("Failed to persist user")
      end

      def send_welcome_email(input)
        # Simulate sending email
        puts "Welcome email sent to #{input[:email]}"
        Success(input)
      end
    end

    transaction = SignupTransaction.new
    result = transaction.call(email: "user@example.com")

    if result.success?
      puts "User signup successful: #{result.value!}"
    else
      puts "Signup failed: #{result.failure}"
    end

This makes complex workflows easier to reason about and test.
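The short-circuiting behaviour behind this can be sketched in plain Ruby. This is a conceptual illustration rather than dry-transaction's implementation; `Outcome` and `run_steps` are hypothetical names, and lambdas stand in for step methods.

```ruby
# Conceptual sketch only; Outcome and run_steps are hypothetical names.
Outcome = Struct.new(:ok, :value) do
  def success?
    ok
  end
end

# Feeds each step the previous step's value and stops calling
# steps as soon as one of them reports a failure.
def run_steps(input, steps)
  steps.reduce(Outcome.new(true, input)) do |outcome, step|
    outcome.success? ? step.call(outcome.value) : outcome
  end
end

calls = [] # records which steps actually ran

validate = lambda do |input|
  calls << :validate
  if input[:email].to_s.match?(/\A.+@.+\..+\z/)
    Outcome.new(true, input)
  else
    Outcome.new(false, "Invalid email")
  end
end

persist = lambda do |input|
  calls << :persist
  Outcome.new(true, input.merge(id: 1))
end

good = run_steps({ email: "user@example.com" }, [validate, persist])
bad = run_steps({ email: "not-an-email" }, [validate, persist])

puts good.success?     # prints true
puts good.value[:id]   # prints 1
puts bad.success?      # prints false
puts bad.value         # prints Invalid email
puts calls.inspect     # persist appears once: it was skipped for the bad input
```

Each step only has to describe its own success or failure. The runner decides whether later steps are called, which is why individual steps stay small and easy to test in isolation.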

Why dry-rb?

The dry-rb ecosystem encourages better practices in Ruby development by focusing on:

- Immutability: Reduces bugs caused by unexpected state changes.

- Explicitness: Code that’s easier to read and understand.

- Modularity: Gems that do one thing and do it well.

- Type safety: A better way to ensure data integrity.

If you’re serious about improving your Ruby projects, give these gems a try. Start small by introducing one or two gems into your stack and see the difference they make.

What’s your experience with dry-rb? Share your thoughts or favorite use cases on the mailing list!
